Orb: VR experience

Post by Mike Daly, Creative technologist and DX Lab Digital Drop-In.

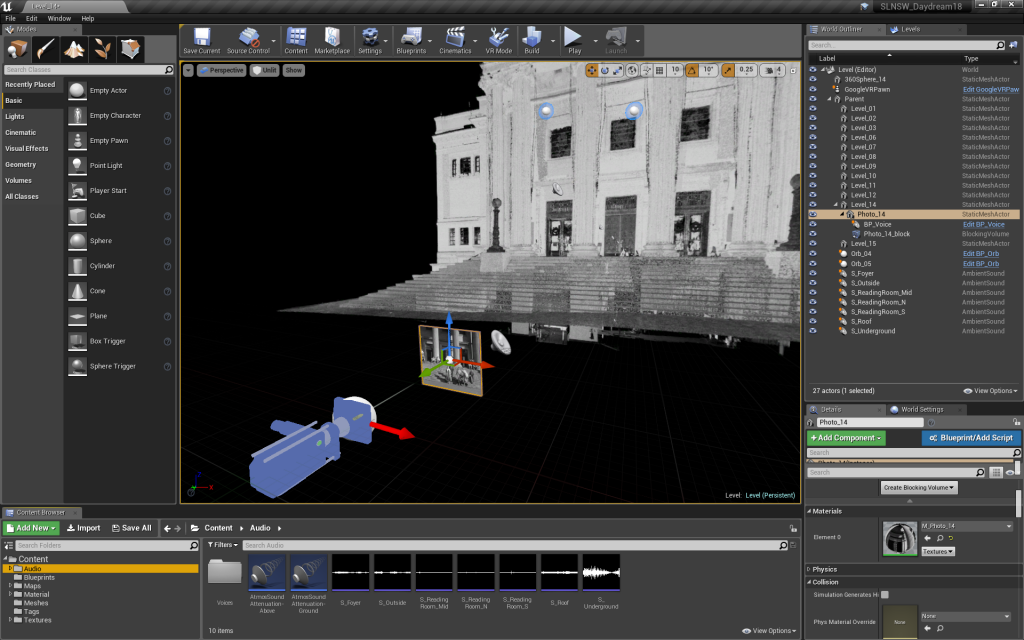

Orb is an interactive VR experience for high school students visiting the State Library of NSW Learning Services team. The project is based on the DX Lab experiment Field of View, which was made as part of a 2018 Digital Drop-In residency.

We’ve wanted school groups visiting the Library to be able to experience Field of View. However, the original version was designed for a gallery installation and runs on a large, high-powered desktop computer. We needed a version of the experience that could be used by multiple students at once, which meant it had to be affordable, robust, simple to use and mobile.

The Library already owned a large number of Daydream VR devices — a VR technology that uses mobile phones installed in relatively affordable headsets. Although the Daydream platform is no longer supported by Google, it was embraced for the project in the name of sustainable design — to continue giving life to abandoned hardware.

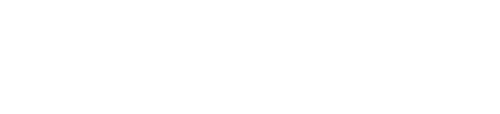

However, the experience couldn’t simply be ported over, as the mobile phones aren’t able to render a point cloud with 15 million points in real time. We needed a different approach, so I pre-rendered the experience as fourteen 360-degree still images (images that surround you on all sides), which the user navigates between using the Daydream controller.
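As a rough illustration of this structure, the sketch below models the experience as a small graph: each location holds one pre-rendered 360-degree image and a list of orbs that lead to other locations. This is not the project's Unreal Engine implementation. The class names, file names and three-location layout are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Location:
    """One pre-rendered viewpoint (hypothetical structure)."""
    name: str
    panorama_file: str  # the 360-degree still shown at this viewpoint
    orb_targets: list[str] = field(default_factory=list)  # locations reachable from here


class Tour:
    """Tracks which location the user is currently standing in."""

    def __init__(self, locations: list[Location], start: str):
        self.locations = {loc.name: loc for loc in locations}
        self.current = self.locations[start]

    def click_orb(self, target: str) -> str:
        """Move to `target` if an orb at the current location links to it.

        Returns the panorama file that should now surround the user.
        """
        if target not in self.current.orb_targets:
            raise ValueError(f"No orb leads from {self.current.name} to {target}")
        self.current = self.locations[target]
        return self.current.panorama_file


# Illustrative three-location layout; the real experience has fourteen.
tour = Tour(
    [
        Location("entrance", "pano_01.png", ["hall"]),
        Location("hall", "pano_02.png", ["entrance", "stacks"]),
        Location("stacks", "pano_03.png", ["hall"]),
    ],
    start="entrance",
)
```

Calling `tour.click_orb("hall")` swaps the surrounding image to that location's panorama. Because only the listed orbs are valid moves, the user can never end up somewhere without a pre-rendered image.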

The solution answers the design requirements, but it also subtly alters the user experience. The user is limited to the 14 locations they can travel to within the point cloud. However, this reduces the chance of getting lost within the experience and keeps the focus on the photographs.

Because the point cloud is rendered into a sphere that surrounds you, the sense of depth is reduced, but not as much as I had initially feared. The user navigates the experience by clicking on pink floating orbs. At first I rendered these into the 360-degree image along with the point cloud, but doing so made them too pixelated. Depicting them as actual 3D geometry makes them look much sharper, allowed me to animate them, and improves the sense of depth.

The phone in a Google VR headset has three degrees of freedom (3DoF), which means it can track rotational movement but not position (as a 6DoF device can). However, Unreal Engine allows you to place the virtual camera on a ‘neck model’, which emulates the lateral motion that your eyes make when you tilt your head from side to side. Although the effect is subtle, it’s very effective.
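To show why a neck model produces that motion, here is a minimal sketch of the underlying geometry. It is not Unreal Engine's implementation. The eyes are treated as a point sitting slightly above and in front of a fixed neck pivot, and rotating the head swings that point around the pivot. The two offset values, the axis convention and the function name are assumptions made for this example.

```python
import math

# Assumed distances in metres from the neck pivot to the eyes.
# Illustrative values only, not the project's settings.
NECK_TO_EYE_UP = 0.075
NECK_TO_EYE_FORWARD = 0.08


def eye_position(yaw_deg: float, pitch_deg: float, roll_deg: float):
    """Return the eye position (right, up, forward) relative to the neck pivot.

    Convention assumed here: positive roll tilts the head towards the right
    shoulder, positive pitch looks up, positive yaw turns to the right.
    """
    yaw, pitch, roll = (math.radians(a) for a in (yaw_deg, pitch_deg, roll_deg))
    x, y, z = 0.0, NECK_TO_EYE_UP, NECK_TO_EYE_FORWARD

    # Roll: rotate about the forward axis (head tilts sideways).
    x, y = (x * math.cos(roll) + y * math.sin(roll),
            -x * math.sin(roll) + y * math.cos(roll))
    # Pitch: rotate about the right axis (head nods up or down).
    y, z = (y * math.cos(pitch) + z * math.sin(pitch),
            -y * math.sin(pitch) + z * math.cos(pitch))
    # Yaw: rotate about the up axis (head turns left or right).
    x, z = (x * math.cos(yaw) + z * math.sin(yaw),
            -x * math.sin(yaw) + z * math.cos(yaw))
    return x, y, z
```

With these assumed offsets, tilting the head 20 degrees towards the right shoulder moves the eyes about 2.6 cm to the right, even though the headset only reports rotation. That small shift is the kind of lateral motion the neck model adds back.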

After an initial prototype was built, the experience was tested with students who gave valuable feedback. This included:

- provide audio commentary on what I am seeing

- a narrative of the Library would be good

- I need orientation, where am I?

- I want to know where I have been before and what I have clicked on

- the circles could change colour when I have visited them

- it could be a ‘choose your own story’ narrative.

This feedback was used to design and develop the final result. One of the key differences to the original experience is the inclusion of a narrator, whose voice guides the user through the journey, giving context and background.
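Two of the suggestions above, remembering where the user has been and recolouring visited orbs, come down to a small piece of state. The sketch below shows one way that could work. It is an illustration rather than a description of how Orb does it: the class name, the location names and the grey "visited" colour are hypothetical.

```python
UNVISITED_COLOUR = "pink"  # the orbs are pink in the experience
VISITED_COLOUR = "grey"    # assumed colour, chosen for this sketch


class VisitTracker:
    """Remembers which locations the user has already travelled to."""

    def __init__(self):
        self.visited = set()

    def mark_visited(self, location: str) -> None:
        """Record that the user has been to `location`."""
        self.visited.add(location)

    def orb_colour(self, target: str) -> str:
        """Colour for an orb leading to `target`, based on visit history."""
        return VISITED_COLOUR if target in self.visited else UNVISITED_COLOUR


tracker = VisitTracker()
tracker.mark_visited("hall")
```

Here an orb leading to `"hall"` would be drawn in the visited colour, while an orb leading anywhere else stays pink.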

Orb will be available to students as part of the Learning Services onsite programming. For more information about Orb please contact learning.library@sl.nsw.gov.au